Craig Stocks

I've been doing some testing as I get ready for the Milky Way season. I admit I'm somewhat overly focused on star rendering, because I'm trying to replicate the feeling you get standing under a dark sky filled with stars.

I also recently ran across a series of discussions about Sony's "star eater" problem, in which the camera applies single-pixel noise reduction to any exposure of 4 seconds or longer, regardless of the LENR setting. As a result, it can mistake small stars for single-pixel noise and reduce or eliminate them.
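
To show how a filter like this could "eat" stars, here is a minimal sketch of a generic isolated-pixel filter. It is NOT Sony's actual algorithm, which isn't public; the function name `suppress_isolated_pixels` and the `ratio` threshold rule are hypothetical, chosen only to illustrate the idea. A star whose light lands on a single pixel looks exactly like a hot pixel to such a rule, while a star spread over several pixels survives.

```python
def suppress_isolated_pixels(img, ratio=2.0):
    """Hypothetical hot-pixel filter: any interior pixel brighter than `ratio`
    times the brightest of its 8 neighbours is replaced by that neighbour value.
    `img` is a list of equal-length rows of numbers; border pixels are left as-is."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]  # copy so the input is not modified
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            neighbours = [img[y + dy][x + dx]
                          for dy in (-1, 0, 1) for dx in (-1, 0, 1)
                          if (dy, dx) != (0, 0)]
            peak = max(neighbours)
            if img[y][x] > ratio * peak:
                out[y][x] = peak  # treated as noise: the "star" is erased
    return out

# A faint sky (value 10) with a one-pixel star (200) in the middle.
sky = [[10] * 5 for _ in range(5)]
sky[2][2] = 200
print(suppress_isolated_pixels(sky)[2][2])  # the star is flattened to the sky level
```

A star that covers a 3×3 patch (say 120 around a 200 core) is left alone by the same rule, because its core is not more than twice as bright as its neighbours. That is one plausible reason the smallest, dimmest stars are the ones that disappear.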

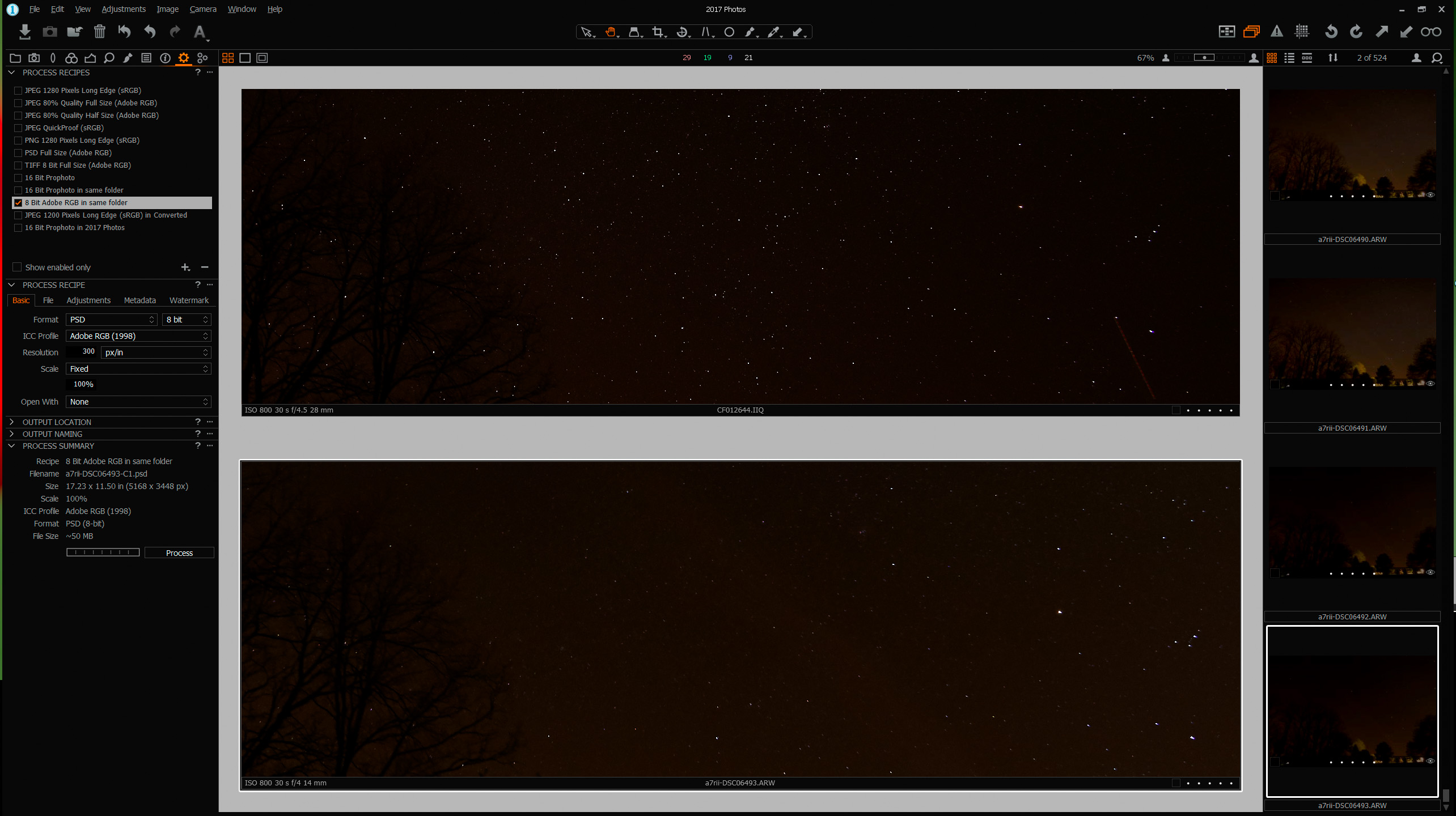

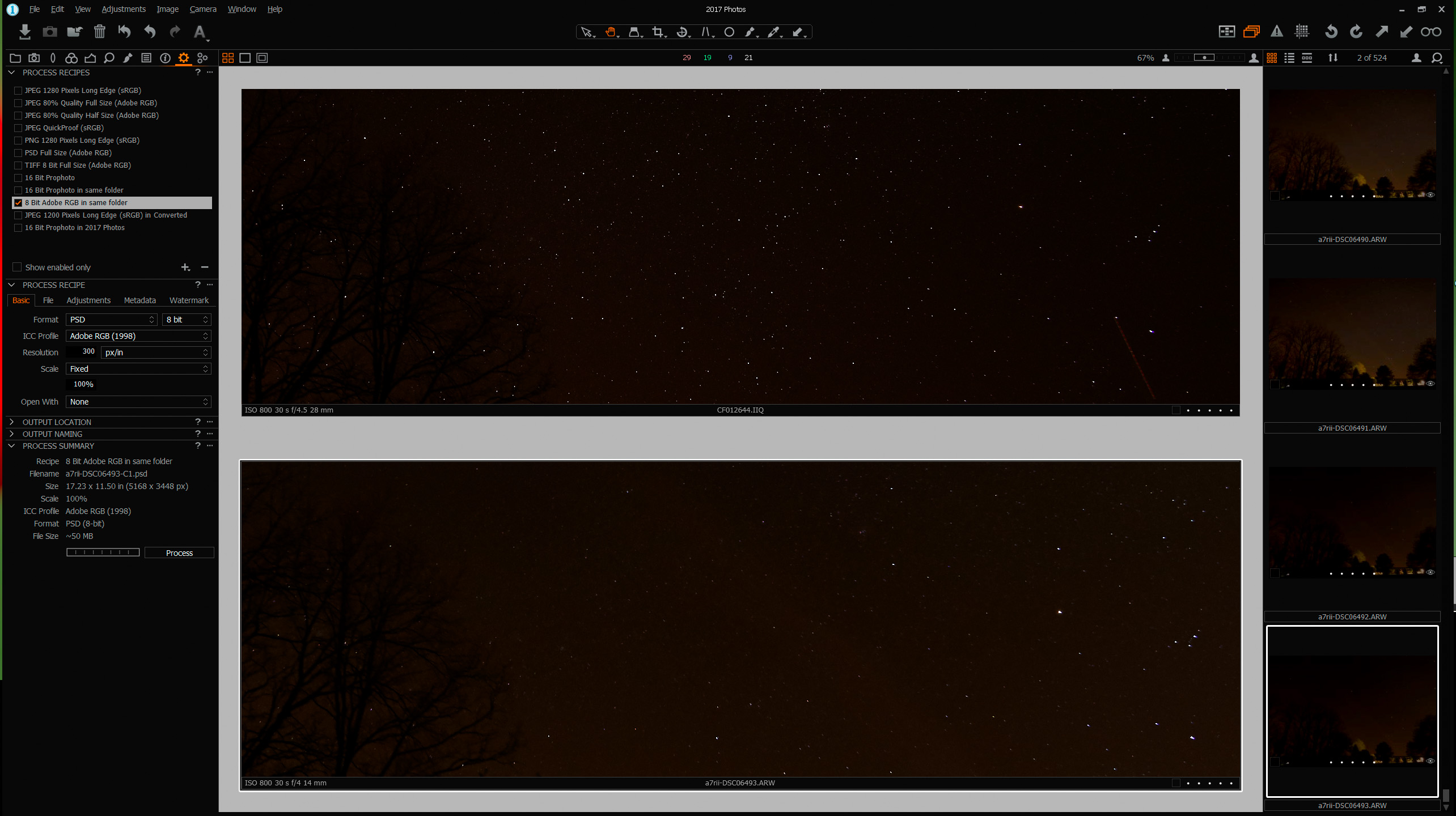

The examples here were shot back-to-back on my tracking mount: the XF IQ3100 with a 28mm lens at f/4.5 and the Sony a7R2 with a Canon 14mm at f/4.0, both for 30 seconds at ISO 800. Both cameras are very ISO invariant, and ISO 800 seems to work as well as or better than higher settings. All LENR was turned off. The sky is from 5 frames stacked in median mode; the foreground is a separate frame with tracking turned off. Similar processing was done in Capture One (C1) for the IQ3100 and in Lightroom for the Sony. Both setups have coma and chromatic aberration problems that I have not addressed.
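
For anyone unfamiliar with median stacking, here is a minimal sketch of a generic per-pixel median stack. It is not necessarily what my stacking software does internally. It assumes the frames are already aligned (which the tracking mount mostly takes care of) and stored as lists of rows; `median_stack` is a hypothetical name. A star present in all 5 frames keeps its value, while something that shows up in only one frame (a plane, satellite, or random hot pixel) is rejected.

```python
from statistics import median

def median_stack(frames):
    """Per-pixel median of aligned frames, each a list of equal-length rows."""
    h, w = len(frames[0]), len(frames[0][0])
    return [[median(f[y][x] for f in frames) for x in range(w)]
            for y in range(h)]

# Five 1x3 frames: a steady star (~100) in the middle column, and a
# satellite streak (250) crossing the first column in frame 4 only.
frames = [
    [[10, 100, 10]],
    [[11, 102, 9]],
    [[9, 98, 11]],
    [[250, 101, 10]],
    [[10, 99, 10]],
]
print(median_stack(frames))  # the streak is gone, the star is kept
```

With an odd number of frames the median is simply the middle value, so no averaging softens the star itself.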

I've also included a screenshot from C1 showing a single frame from each camera with no edits applied.

Notice the region above Orion where a dim portion of the Milky Way is visible. The IQ3100 captures a distinct field of tiny stars that the Sony does not. I've suspected the star eater in other instances, but this seems to show a clearly better result for the IQ3100.

Some other observations.

For a given exposure, both cameras seem to record stars at about the same pixel dimension, such as 7 pixels wide for a reasonably bright star. Because the Sony has fewer pixels across the frame, that 7-pixel star takes up a larger fraction of the image, so stars actually print larger from the Sony at the same print size.
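
The arithmetic behind that is simple. This sketch assumes long-edge widths of roughly 7952 px for the a7R2 and 11608 px for the IQ3100, and a hypothetical 20-inch-wide print; the function name is illustrative only.

```python
SONY_A7R2_WIDTH_PX = 7952   # assumed long-edge pixel count
IQ3100_WIDTH_PX = 11608     # assumed long-edge pixel count

def star_width_on_print(star_px, image_width_px, print_width_in):
    """Physical width (inches) of a star `star_px` wide when the full
    image width is printed at `print_width_in` inches."""
    return star_px / image_width_px * print_width_in

sony = star_width_on_print(7, SONY_A7R2_WIDTH_PX, 20)
iq = star_width_on_print(7, IQ3100_WIDTH_PX, 20)
print(f"Sony: {sony:.4f} in, IQ3100: {iq:.4f} in, ratio {sony / iq:.2f}")
```

On those assumptions a 7-pixel star is about 0.0176 in wide on the Sony print versus about 0.0121 in on the IQ3100 print, roughly 1.46 times larger, which is just the ratio of the two pixel widths.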

Most stars are recorded as pure white, and longer exposures simply record a star as a larger white speck. Of course, stars don't really vary in apparent size nearly as much as they do in brightness, but in photos brighter stars are shown as bigger rather than brighter.
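
One way to see why brightness turns into size is a simple model, assuming (as an idealization) that each star's blur has a Gaussian profile of fixed width and that everything above the sensor's saturation level clips to white. The core clips first; a brighter star just pushes the clipping boundary outward. The function and parameter names here are hypothetical.

```python
import math

def clipped_diameter(peak, sigma_px, saturation):
    """Diameter (px) of the region where a Gaussian star profile
    peak * exp(-r**2 / (2 * sigma_px**2)) reaches the saturation level."""
    if peak <= saturation:
        return 0.0  # star never clips; it is rendered as a grey/coloured dot
    return 2.0 * sigma_px * math.sqrt(2.0 * math.log(peak / saturation))

# Same blur (sigma = 1 px), same clip level, increasing brightness.
for peak in (2, 4, 16, 256):
    print(peak, round(clipped_diameter(peak, 1.0, 1.0), 2))
```

In this model the white disk grows only with the square root of the logarithm of brightness (an 8× brighter star, going from 2 to 16, has a white disk twice as wide), so very different brightnesses end up as fairly similar white specks that differ mainly in size.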

The Sony seemed to do a better job of differentiating star colors, but I believe a lot of that is false color from demosaicing small, bright objects. Lightroom also seems to be more prone to false colors around stars than C1.
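
Here is a rough illustration of where that false color can come from, using the simplest possible demosaic ("superpixel": each 2×2 RGGB block becomes one RGB pixel). Lightroom and C1 use far more sophisticated, proprietary methods, so this only shows the underlying problem: when a star is smaller than a Bayer cell, only one color filter sees it. The RGGB layout and the function name are assumptions for the sketch.

```python
def superpixel_demosaic(raw):
    """Collapse each 2x2 RGGB block of a Bayer mosaic into one (R, G, B) pixel,
    using the mean of the two green sites. Assumes even width and height."""
    out = []
    for y in range(0, len(raw), 2):
        row = []
        for x in range(0, len(raw[0]), 2):
            r = raw[y][x]
            g = (raw[y][x + 1] + raw[y + 1][x]) / 2
            b = raw[y + 1][x + 1]
            row.append((r, g, b))
        out.append(row)
    return out

# 4x4 mosaic of dark sky (10). A white star's light lands only on the
# red photosite at (0, 0).
raw = [[10] * 4 for _ in range(4)]
raw[0][0] = 200
print(superpixel_demosaic(raw)[0][0])  # strongly red, though the star is white
```

Shift the same star by one photosite onto a green or blue site and it comes out green or blue instead, which matches the "colorful" stars that don't reflect the star's real color.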

Tracking and using a higher-resolution camera seem to make the challenges worse. For instance, it's common for the IQ3100 images to have such small star points that they're lost in the scene, whereas if I use the Sony without tracking I get larger, more visible stars.
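
To put a rough number on the untracked case, here is a sketch of how far a star drifts across the sensor during an exposure. It uses the standard sidereal rate (about 15.04 arcseconds per second at the celestial equator, scaled by the cosine of declination) and the usual pixel-scale formula. The roughly 4.5 µm pixel pitch for the a7R2 is an assumption, and the function names are hypothetical.

```python
import math

SIDEREAL_RATE_ARCSEC_PER_S = 15.041  # apparent sky rotation at the celestial equator

def pixel_scale_arcsec(pixel_pitch_um, focal_length_mm):
    """Sky angle covered by one pixel, in arcseconds."""
    return 206.265 * pixel_pitch_um / focal_length_mm

def trail_length_px(exposure_s, pixel_pitch_um, focal_length_mm, declination_deg=0.0):
    """Approximate untracked star trail length in pixels."""
    drift = SIDEREAL_RATE_ARCSEC_PER_S * exposure_s * math.cos(math.radians(declination_deg))
    return drift / pixel_scale_arcsec(pixel_pitch_um, focal_length_mm)

# Sony a7R2 (assumed ~4.5 um pixels) with the 14mm, 30 s, star near the equator.
print(round(trail_length_px(30, 4.5, 14), 1))
```

On those assumptions, a star near the celestial equator (roughly where Orion sits) drifts about 7 pixels in 30 seconds with the 14mm on the Sony. That smear spreads each star over more pixels, which may be part of why the untracked Sony stars read as larger and more visible, and also puts them out of reach of a single-pixel filter.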

I don't know the solutions or best practices. I'm just sharing some observations in the hope of generating some discussion of other approaches.